Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

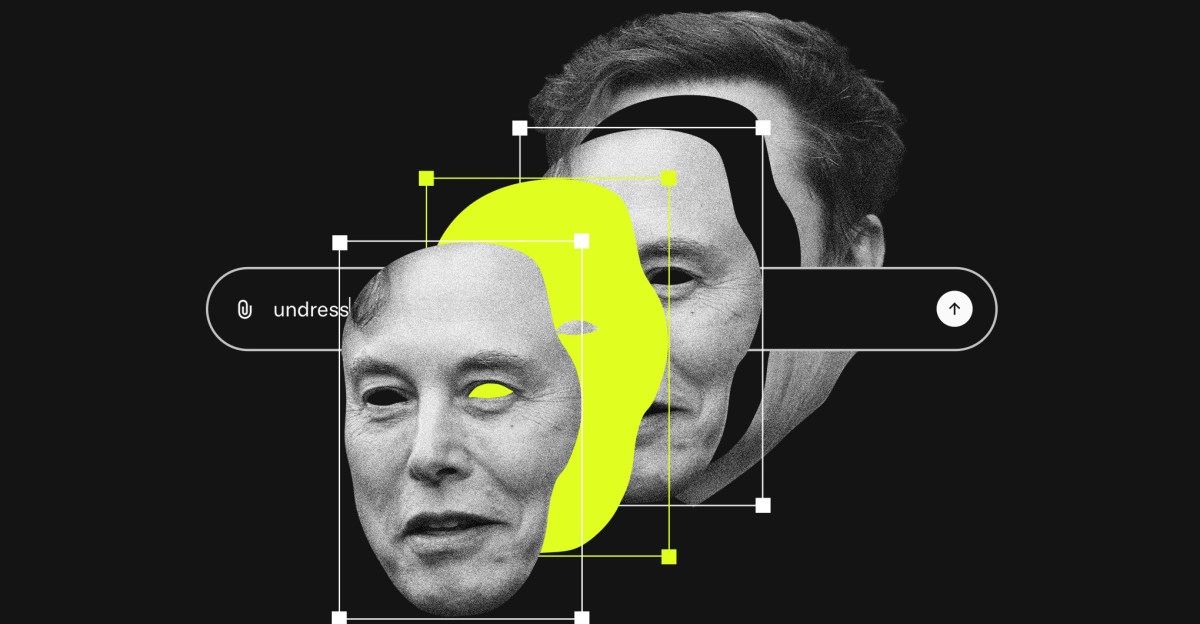

Since Grok is connected to X, the platform formerly known as Twitter, users can simply ask Grok to edit any photo on that platform, and Grok will often do so and then distribute the edited photo across the entire platform. Over the past few weeks, X and Elon have repeatedly claimed that various guardrails have been put in place, but so far they have been easy to get around. It is now clear that Elon wants Grok to be able to do this, and is very annoyed by anyone who wants him to stop, especially the various governments around the world that are threatening legal action against X.

This is one of those situations where if you describe a problem to someone, they will intuitively feel that someone should do something about it. That’s right: someone should be able to do something about a one-click harassment machine like this that generates images of women and children without their consent. But who has that power, and what they can do with it, is a very complex question, tied up in the thorny history of content moderation and the legal precedents that support it. So I invited Riana Pfefferkorn on the show to talk me through all of this.

Riana has joined me before to explain some of the complex internet governance problems of the past. She is currently a policy fellow at the Stanford Institute for Human-Centered Artificial Intelligence, and has a deep background in what regulators and legislators in the United States and around the world could do about a problem like Grok’s, if they chose.

Riana really helped me work through the legal frameworks that are in place here, the different actors who have leverage and can bring pressure to bear on the situation, and where we might see all of this going as xAI does damage control but pretty much continues to ship this product and continues to cause real damage.

Here’s one thing I’ve been thinking about a lot as this whole situation has unfolded. Over the past 20 years or so, the idea of content moderation has gone in and out of favor as different types of social and community platforms have waxed and waned. The history of a platform like Reddit, for example, is a microcosm of the entire history of content moderation.

Around 2021, we reached a real high-water mark for the idea of moderation, trust, and safety on these platforms as a whole. That’s when coronavirus misinformation, election lies, QAnon conspiracies, and inciting a mob at the Capitol could get you banned from most major platforms… even if you were the president of the United States.

It’s safe to say that era of content moderation is over, and we are now in a much more chaotic and laissez-faire place. It’s possible that Elon and his porn generator will swing this pendulum back, but even if they do, the results could still be more complicated than anyone wants.

If you’d like to read more about what we discussed in this episode, check out these links:

Questions or comments about this episode? Email us at decoder@theverge.com. We really do read every email!