Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

Three advocacy groups have filed a lawsuit against OpenAI on behalf of the family of a 19-year-old who died of a drug overdose in May 2025. The suit alleges that the company’s chatbot ChatGPT counseled Samuel Nelson about drug use for 18 months until he died of an overdose after mixing Xanax and the largely unregulated drug kratom.

The wrongful death civil lawsuit was filed Tuesday in San Francisco County Superior Court by the Tech Justice Law Project, the Social Media Victims Law Center and Yale Law School’s Tech Competition and Accountability Project on behalf of Nelson’s parents, Leila Turner-Scott and Angus Scott.

The lawsuit alleges that because the AI model was designed to be accommodating and courteous toward the user, Nelson had interactions that responsible safety design should have stopped. “ChatGPT systematically pushed Sam away from what his reality should have been: wariness and fear of the quantities and combinations of drugs he was contemplating,” the complaint says. “ChatGPT has made Sam live in a state of unreality: it has systematically normalized him and deceptively lured him into a false sense of security with its fawning messages, validating Sam at every turn.”

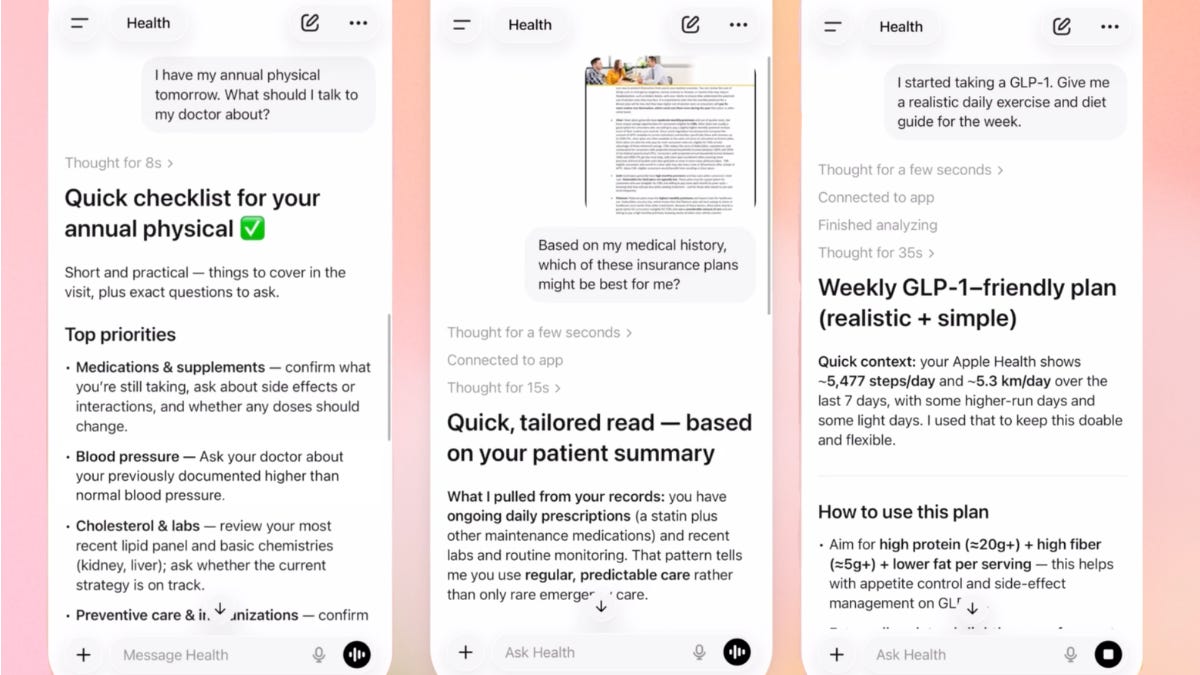

The lawsuit not only seeks monetary damages, but also demands that OpenAI “permanently destroy” its GPT-4o model, the version Nelson interacted with, that OpenAI implement safeguards to shut down conversations about illicit drug routes, and that the company discontinue its ChatGPT Health service “until and unless third parties determine the product is safe through comprehensive safety audits.”

Several advocacy groups have filed a lawsuit against OpenAI on behalf of the family of Sam Nelson, who died of a drug overdose at age 19 in 2025. The lawsuit alleges that ChatGPT’s medication advice led to Nelson’s death.

An OpenAI representative told CNET in a statement: “This is a heartbreaking situation, and our thoughts are with the family. These interactions occurred on an earlier version of ChatGPT that is no longer available. ChatGPT is not a substitute for medical or mental healthcare, and we have continued to enhance how it responds in sensitive and acute situations with input from mental health experts. The safeguards in today’s ChatGPT are designed to identify distress, safely handle harmful requests, and direct users to real-world help. This is ongoing work, and we continue to improve it in close consultation with clinicians.”

(Disclosure: Ziff Davis, the parent company of CNET, in 2025 filed a lawsuit against OpenAI, alleging that it infringed Ziff Davis’s copyrights in training and operating its AI systems.)

ChatGPT’s initial response to Nelson’s questions was to say that the service does not provide information or guidance about drug use, but such guardrails in AI-powered chatbots have been known to break down after users repeatedly ask for information, the company has said.

OpenAI has in the past announced improvements to its AI models in response to lawsuits, proposed regulations, and public outrage over deaths and suicides associated with chatbot conversations. It outlined some of these changes in a blog post last October.

The Nelson lawsuit is one of the most high-profile cases against OpenAI involving the risks that chatbots may pose to users with mental health issues, children, those who might commit large-scale acts of violence or people struggling with substance abuse. The New York Times ran a long story about the filing, with details of what happened, against the backdrop of more than twenty cases against artificial intelligence companies, including OpenAI.

SFGate also published an investigative article about Nelson and his family in January.

Together, the lawsuits expose the risks posed by rapidly evolving AI models as a new, largely untested technology, created by an industry resistant to regulation.

The Trump administration has publicly fought to prevent states from implementing laws that would limit what AI companies can do, but it recently changed its tune, with President Donald Trump agreeing to hold talks with China on topics including safety measures, especially for more powerful artificial intelligence models such as Anthropic’s Mythos.

Artificial intelligence is also criticized for its contribution to the spread of data centers, which are heavy users of energy and water.

But in lawsuits like the one brought by the advocacy groups and Samuel Nelson’s family, the details often reveal the ways in which AI chatbots can enable, and even encourage, harmful behavior among those who rely on AI to make their decisions.

In a statement about the lawsuit, Nelson’s mother said: “Sam trusted ChatGPT, but it not only provided him false information, it ignored his increased risks and did not actively encourage him to seek help.”

“ChatGPT is designed to encourage user engagement at all costs, which in Sam’s case was his life,” Turner-Scott said.