Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

After a weeks-long standoff between Anthropic and the Pentagon, the company has scored a win: a judge granted Anthropic a preliminary injunction in its lawsuit, temporarily blocking the government’s blacklisting while the case proceeds.

“War Department records show that it classified Anthropic as a supply chain risk because of its ‘aggressive approach through the press,’” Judge Rita F. Lin, a district judge in the Northern District of California, wrote in the order, which will take effect in seven days. “Punishing Anthropic for forcing public scrutiny of the government’s contractual position is classic illegal First Amendment retaliation.”

A final ruling could take weeks or months.

“We are grateful to the court for moving so quickly, and are pleased that it agreed that Anthropic is likely to succeed on the merits,” Anthropic spokeswoman Danielle Cohen said in a statement Thursday. “While this case was necessary to protect Anthropic, our customers, and our partners, our focus remains on working productively with the government to ensure that all Americans benefit from safe and reliable AI.”

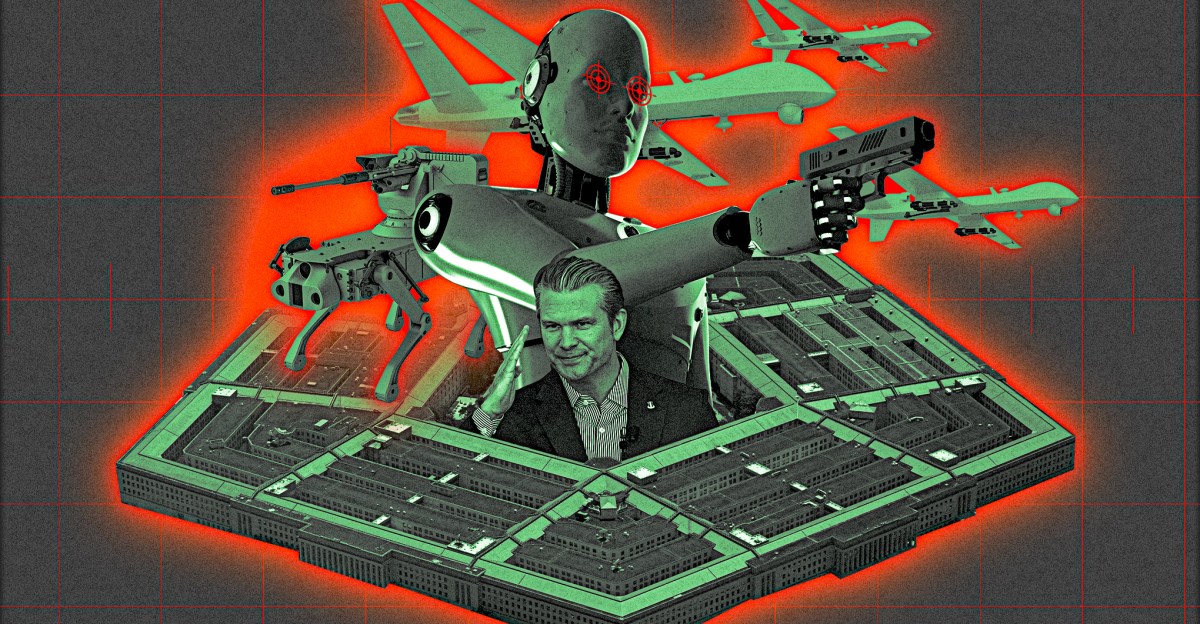

“I think this case touches on an important debate,” Judge Lin said during Tuesday’s hearing. “On the one hand, Anthropic says its AI product, Claude, is not safe for use in lethal autonomous weapons and domestic mass surveillance. Anthropic’s position is that if the government wants to use its technology, the government must agree not to use it for those purposes. On the other hand, the War Department says military commanders must decide what is safe for its AI to do.”

Judge Lin went on to say: “It is not my role to decide who is right in that discussion… The War Department decides which AI product it wants to use and buy. And everyone, including Anthropic, agrees that the War Department is free to stop using Claude and look for a more lenient AI vendor.” She added: “I see the question in this case as… whether the government broke the law when it went beyond that.”

It all started with a memo sent by Defense Secretary Pete Hegseth on January 9, calling for “any lawful use” language to be written into any contract to procure AI services within 180 days, including existing contracts with companies like Anthropic, OpenAI, xAI, and Google. Anthropic’s negotiations with the Pentagon stretched on for weeks, hinging on two “red lines,” uses of AI the company did not want the military to pursue: domestic mass surveillance and lethal autonomous weapons (AI systems with the ability to kill targets without any human input in the decision-making process). The ensuing roller-coaster series of events included a barrage of insults on social media, an official “supply chain risk” designation with the potential to significantly hamper Anthropic’s business, rival AI companies swooping in to make deals, and a lawsuit.

In its lawsuit, Anthropic argues that it was penalized for speech protected by the First Amendment, and it seeks to reverse its supply chain risk designation.

It is rare, and perhaps unprecedented, for a U.S. company to be designated a supply chain risk, a label typically reserved for non-U.S. companies potentially linked to foreign adversaries. Anthropic’s designation raised eyebrows nationwide and thrust the dispute between the two parties into a wider debate, amid concerns that disagreeing with the presidential administration could expose a company in any sector to significant retaliation.

Anthropic’s business has been significantly affected by the designation, the company said in court filings, which state that it has “received communications from numerous external partners… expressing confusion about what is being asked of them and concern about their ability to continue working with Anthropic” and that “dozens of companies have contacted Anthropic” seeking guidance or information about their termination rights. Depending on how far the government goes in barring its contractors from working with Anthropic, the company said, anywhere from hundreds of millions to several billion dollars in revenue could be at risk.

During Tuesday’s hearing, both parties had an opportunity to respond to Judge Lin’s questions, which had been released in a document the day before and centered on matters such as whether Hegseth lacked the authority to issue certain directives and why Anthropic was designated a supply chain risk. In her previously released questions, the judge also asked about the circumstances under which a government contractor could face termination for using Anthropic’s technology in its work, for example: “If a Department contractor used Claude Code as a tool to write software for the Department’s national security systems, would that contractor face termination as a result?”

On Tuesday, the judge also appeared to admonish the War Department over Hegseth’s post on X, which, according to Anthropic’s earlier court filings, has caused widespread confusion. The post states: “Effective immediately, any contractor, supplier, or partner that does business with the U.S. military may not conduct any business activity with Anthropic.”

“You stand here and say, ‘We said that but we didn’t really mean it,’” Judge Lin said during the hearing, later pressing the department on why Hegseth wrote the post barring contractors from working with Anthropic rather than simply designating Anthropic a supply chain risk.

In a series of questions Tuesday, Judge Lin asked whether the War Department planned to terminate contractors based on their work with Anthropic if it was separate from their work with the department, and the War Department representative replied: “That is my understanding.”

Judge Lin asked: “Suppose I’m a military contractor. I don’t provide information technology to the military. I provide toilet paper to the military. I wouldn’t get fired for using Anthropic, is that accurate?” The War Department representative replied: “As for work outside the scope of the Department of War, that is my understanding.” But when the judge asked whether a military contractor providing IT services to the War Department, though not for national security systems, could be terminated for using Anthropic, the representative did not give a specific answer.

During the hearing, Judge Lin cited one of the amicus curiae briefs, which she said used the term “attempted mass murder.” “I don’t know if this is murder, but it seems like an attempt to cripple Anthropic,” she said.

“We continue to be irreparably harmed by this directive,” Anthropic’s attorney said during the hearing, referring to Hegseth’s nine-paragraph post on X.

In a recent court filing, the Department of Defense claimed that Anthropic could, in theory, “attempt to disable its technology or proactively change the behavior of its model either before or during ongoing combat operations” if it felt the military was crossing its red lines, a hypothetical the Pentagon said it viewed as an “unacceptable risk to national security.” The judge’s previously released questions appeared to challenge that claim, or at least to ask for more information about it: “What evidence is there in the record showing that Anthropic had continued access to or control of Claude after its delivery to the government, such that Anthropic could engage in such sabotage or subversion?”