Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

In early January, a group of 90 or so political, community, and thought leaders gathered at the New Orleans Marriott Hotel for a secret conference on artificial intelligence, so secret, in fact, that no one knew who else had been invited until they entered the room. Church leaders and conservative academics sat next to trade union representatives. Suddenly, the progressive power brokers who drafted Bernie Sanders to run for president found themselves breathing the same air as MAGA talking heads. The AI leaders who invited them to New Orleans hoped that none of them would kill each other.

On Wednesday, the Future of Life Institute, one of the most authoritative voices in the world of AI safety, released the results of that meeting: the Pro-Human AI Declaration, a brief document containing five guiding principles on how AI development should put humanity first, with a clear focus on avoiding the concentration of power in the hands of the powerful; maintaining the well-being of children, families and communities; and preserving human agency and freedom. It has the widest range of signatories I have ever personally seen on a single political document.

Powerful civic organizations outside the tech world signed the declaration: major unions like the AFL-CIO, the American Federation of Teachers, and the Screen Writers Guild; religious organizations such as the G20 Interfaith Forum and the Conference of Christian Leaders; the Progressive Democrats of America, the group that drafted Bernie Sanders to run as a Democrat in 2016; and think tanks like the conservative Institute for Family Studies and advocacy groups like Parents RISE!.

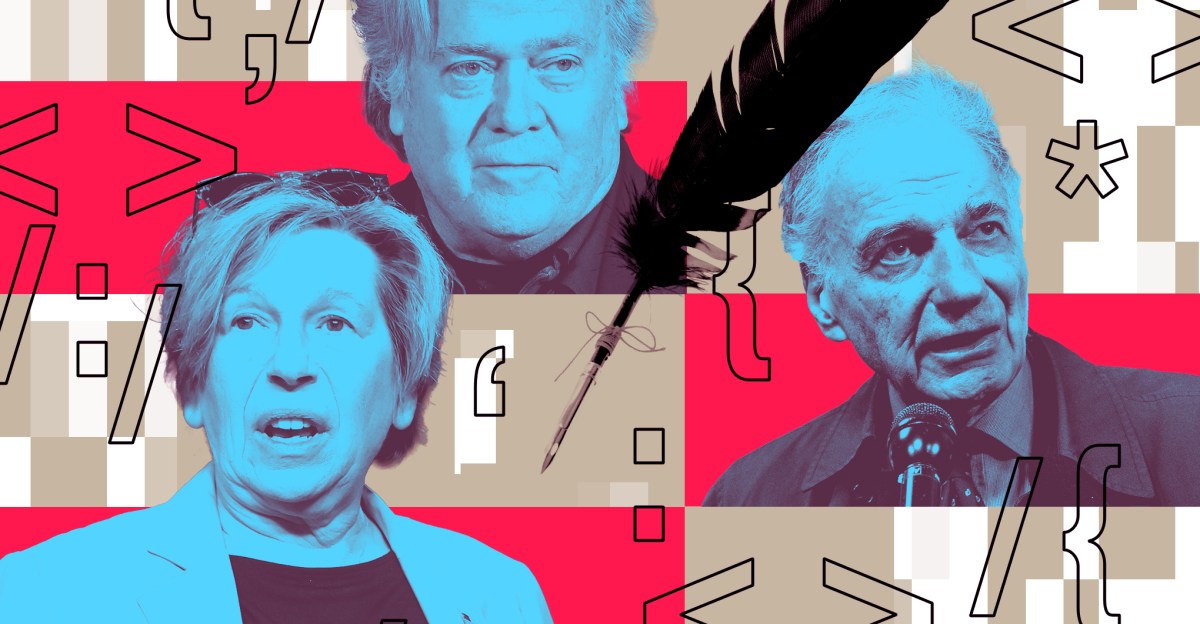

Individual signatories range even further: former presidential candidate Ralph Nader, AFT President Randi Weingarten, Signal Foundation President Meredith Whittaker, The Blaze's Glenn Beck, War Room's Steve Bannon, Virgin Group founder Sir Richard Branson, former National Security Advisor Susan Rice, members of SAG-AFTRA, and leaders of major evangelical organizations. More are expected to sign in the next few days.

The meeting was held under Chatham House rules and the list of attendees remains private. But the participants who agreed to talk to The Verge about the experience said they were invited by Max Tegmark, co-founder of FLI and an MIT professor who was named to the TIME 100 AI list. “We’ve spent a lot of time talking to him over the past few months,” Weingarten, the teachers union president, told The Verge in a phone interview. Although she couldn’t make it to New Orleans, she was involved in drafting the document, and found notable similarities between FLI’s worldview and the AFT’s “common sense guardrails” for using AI in schools. “We’ve been on parallel paths for so long without even knowing it.”

Joe Allen, co-founder of Humans First and a former correspondent on Bannon’s show War Room, told The Verge that Tegmark also invited him to New Orleans, as well as to an earlier proof-of-concept meeting in Manhattan. Although the attendees spanned a wide and conflicting range of views and the political tensions had not completely disappeared, Allen was surprised by how quickly they all agreed on similar themes: lethal autonomous weapons should not be controlled solely by artificial intelligence. AI companies should not exploit children’s emotional attachment for profit. AI should not be granted legal personhood. (The least popular position in the declaration was still approved by 94% of attendees.)

“I think of it as if there was knowledge that there were toxins in the water supply, or that drugs were flooding schools, anything like that, in general: most people would be against it and it’s not partisan,” he said. AI was a bit more complicated, since people’s general opinions of specific AI models divided along partisan lines (Grok was the “based” AI and Anthropic was the “woke” AI), but for Allen, the distinction was meaningless. “Like, what do ‘based’ and ‘woke’ mean at this point?”

“We won’t have the luxury of discussing all those other issues if we can’t get this right. So, let’s get this right.”

Nearly a decade ago, FLI laid out a more optimistic set of principles for AI research: 23 principles, to be precise, written during the 2017 Asilomar conference on beneficial AI, which attracted more than 100 of today’s tech stars. Signatories and supporters of the Asilomar AI Principles included AI leaders such as Sam Altman, Elon Musk, and Demis Hassabis; prominent figures such as Stephen Hawking and Ray Kurzweil; and representatives of major companies such as Google, Intel and Apple.

But this time, no one from industry was invited, let alone people of the caliber of Altman and Musk. “This was actually a very deliberate design choice,” Emilia Javorsky, director of the Futures Program at FLI, told The Verge. The more she attended conferences and events about the impact of AI on society, the more she noticed that corporate interests would eventually become the dominant perspective in the room, “just because of the nature of their size, weight and funding capabilities.” Instead, the invitees came from civil society organizations, all of which were experiencing widespread disruption from AI, and all of which were tired of having their concerns ignored by big tech companies.

Anthony Aguirre, FLI’s other co-founder and a distinguished professor of cosmology at UC Santa Cruz, emphasized that this declaration was not an attempt to bring back the Asilomar principles, but a grim acknowledgment of a dark new reality: one where their former colleagues were now heads of major corporations, racing to achieve artificial general intelligence before their competitors, and satisfying shareholders before tackling safety. The power to direct the development of artificial intelligence has increasingly been concentrated in the hands of a few people, and the Trump administration’s deregulation has further empowered them. “Other than the total mass of humanity, there was one entity that would have real control over what they could do, and that was the United States government,” he told The Verge. “Now that it has their backs and wants to keep them unfettered, the only thing that poses a real threat is other companies.”

“If the government does not do it, the people must force the government to do it.”

In the absence of big tech companies and public scrutiny, there was something unique about how quickly this group coalesced around the same issues and reached the same conclusions, Javorsky said. Over the next few days, Javorsky kept hearing the same phrase: “We won’t have the luxury of discussing all these other issues if we don’t get this right. So, let’s get this right.”

In Weingarten’s view, the declaration was both a mission statement for what she called the “Demanding Main Coalition” (a strategic alliance of political opponents) and a way to keep all their efforts coordinated against a government that elevates the establishment above society. “What’s really important is that there are other people who have said, ‘Let’s try to create a larger coalition to say that we need humanity to be at the center of AI,’” she said. The AFT might have been able to push the issue of child safety on its own, but there was only so much pressure it could put on lawmakers, she noted. But if it joined with several other labor unions, plus religious organizations, plus some allies on the other side of the aisle? Now those lawmakers would be nervous. “If the government won’t do it, the people have to force the government to do it. You have to start with a statement of principles.”

“If there’s one statement I’m going to make about the whole thing, which is what I told the group when I brought it to their attention, it’s that no one is going to engineer a pro-human movement. The only thing you can do is inspire it,” Allen said. “I think statements like this should inspire a pro-human movement. Like a seminal document that sets the tone… There’s no amount of social engineering, or money, or the media, or any of that, that’s really going to do that.”

However, the exact form of this movement remains unclear, or at least, it cannot be easily translated into elections. FLI runs an advertising campaign called “Protecting Humanity,” but as a 501(c)(3), it cannot endorse candidates, their campaigns, or ballot initiatives during the midterm elections. It did, however, conduct a survey with Tavern Research in February that tested the popularity of the declaration’s principles among voters. Although survey respondents were closely divided by which party they voted for and which party they belonged to, they overwhelmingly supported the statements that appeared in the declaration. The worst-performing principle, that AI should not create monopolies or concentrate control in the hands of a few, was supported by 69% of respondents. The best-performing principle, that humans need to remain stewards of AI and prevent it from harming children, families and communities, received 80% support.

For Javorsky, the results of the poll confirmed the validity of the conference’s points. “It’s one thing to have a whole bunch of civil society actors in a room together and thinking about something. But you actually have to validate them with real people. That actually resonates with them.”

When we spoke on Thursday, Anthropic, which recently raised the possibility that its AI has gained consciousness, was in the midst of a battle with the Pentagon over whether the military could use its artificial intelligence for autonomous lethal weapons without human oversight. By Friday evening, OpenAI had thrown Anthropic under the bus to score its own Pentagon contract. Days after that battle ended, the United States used human-powered tools to assassinate Iran’s Ayatollah, several more reports emerged of impending AI layoffs, and the scale of the Pentagon’s requests for mass surveillance became clearer. Alan Minsky, executive director of the Progressive Democrats of America and an attendee at the meeting, told The Verge that he does not expect any political opposition to the declaration, whether from the left or the right.

“Altman and Musk have certainly taken a blunt approach to what poses serious threats to societies: the psychological deterioration of populations that increasingly live online, the impact of persistent economic maldistribution of wealth, and of course a disdain for the idea that basic protections should come before profits,” he said. “The prospect of an existential threat to humanity is no longer something they even blink at. As the general public realizes that this is their position, and that they have complete contempt for the well-being of the average person, yes, we believe the public will be on our side.”