Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

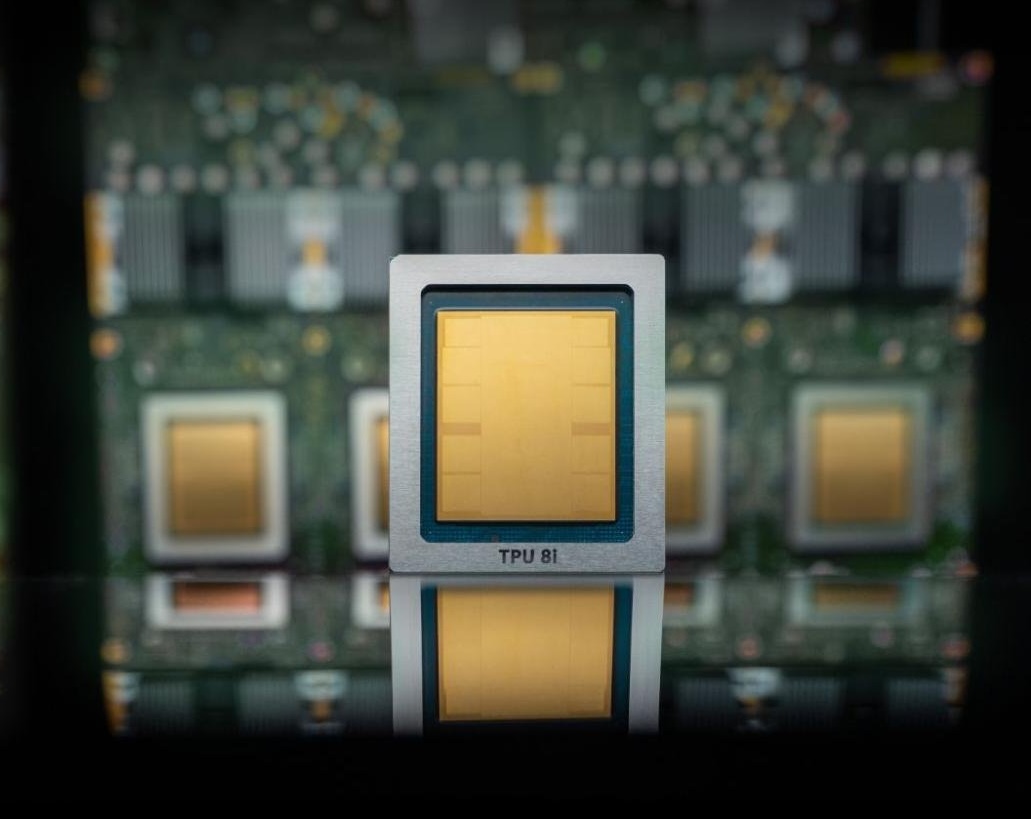

Google Cloud announced on Wednesday that the eighth generation of its custom-designed AI chips, or tensor processing units (TPUs), will be split into two parts. One chip, called the TPU 8t, is geared toward model training, while the other, called the TPU 8i, is aimed at inference.

Inference is the ongoing use of models, aka what happens after users submit prompts.

As you might expect, the company is touting some impressive performance specs for these new TPUs compared to previous generations: up to 3x faster training of AI models, 80% better performance per dollar, and the ability to have over a million TPUs working together in a single cluster. The result should be more compute for much less power, and lower cost to customers, than previous versions. These chips are called TPUs, not GPUs, because Google’s custom low-power chips were originally called Tensor.

But Google’s chips aren’t a full-blown direct attack on Nvidia’s future, at least not yet. Like other giant cloud providers, including Microsoft and Amazon, Google uses these chips to complement the Nvidia-based systems it offers in its infrastructure. They are not a wholesale replacement for Nvidia. In fact, Google promises that its cloud will offer Nvidia’s latest chip, Vera Rubin, later this year.

One day, the hyperscalers building their own AI chips (which include Amazon, Microsoft, and Google) may need Nvidia less and less, as enterprises move their AI needs to those clouds and move their applications onto those chips.

For now, however, betting against Nvidia has not paid off. As leading chip market analyst Patrick Moorhead jokingly posted on X, he predicted Google’s TPU would be bad news for Nvidia (and Intel) back in 2016, when the search giant launched its first one. Nvidia is now a company with a market cap of nearly $5 trillion, so that prediction hasn’t exactly stood the test of time.

If all goes according to Nvidia’s plan, Google’s growth as a cloud AI provider will lead to more business for the chipmaker, even if plenty of workloads run on Google’s chips.

In fact, Google also says it has agreed to work with Nvidia on designing computer networking that allows Nvidia-based systems to perform more efficiently in its cloud. In particular, the two tech giants are promoting a software-based networking technology called Falcon, which Google created and open sourced in 2023 under the godfather of all open source data center hardware organizations, the Open Compute Project.
