Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

AI Mode, Google's experimental Search feature that lets users ask multi-part questions through an AI interface, is rolling out more widely, the company announced at its annual developer conference, Google I/O 2025, on Tuesday.

The feature builds on Google's existing AI-powered search experience, AI Overviews, which displays AI-generated summaries at the top of the search results page. Launched last year, AI Overviews has seen mixed results, with Google's AI offering dubious answers and advice, like a suggestion to use glue on pizza, among other things.

However, Google claims AI Overviews is a success in terms of adoption, if not accuracy, as more than 1.5 billion monthly users have used the AI feature. The company says it will now exit Labs, expand to more than 200 countries and territories, and become available in more than 40 languages.

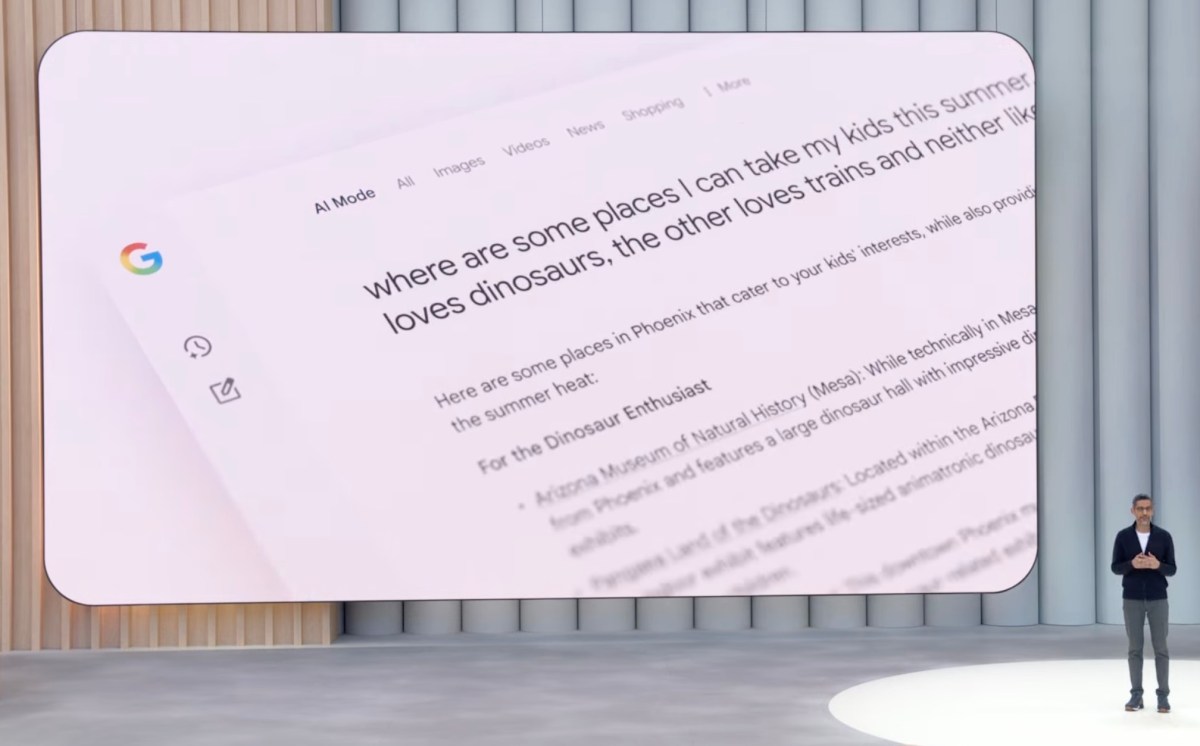

Meanwhile, AI Mode allows users to ask complex questions and follow-ups. Initially available in Google's Search Labs for testing, the feature arrived as other AI companies, such as Perplexity and OpenAI, expanded into Google's territory with their own web search features. With Google concerned about potentially losing search market share to competitors, AI Mode is the company's pitch for what the future of search will look like.

As AI Mode rolls out more broadly, Google is promoting some of its new capabilities, including Deep Search. While AI Mode takes a question and breaks it into different subtopics to answer your query, Deep Search does this at scale. It can issue dozens or even hundreds of queries to provide your answers, which will also include links so that you can dig into the research yourself.

The result is a fully cited report generated in minutes, which could save you hours of research, says Google.

The company suggested using Deep Search for things like comparison shopping, whether for a large home appliance or a summer camp for the kids.

Another AI-powered shopping feature coming to AI Mode is a virtual “try it on” option for clothing, which uses an uploaded picture of yourself to generate an image of you wearing the item in question. The feature will have an understanding of 3D shapes and fabrics, Google notes, and will begin rolling out in Search Labs today.

In the coming months, Google says it will offer a tool that will purchase items on your behalf once they reach a specific price. (You will still have to click “buy for me” to start this agent.)

Both AI Overviews and AI Mode will use a custom version of Gemini 2.5, and Google says AI Mode's capabilities will gradually roll out to AI Overviews over time.

AI Mode will also support complex data in sports and finance queries, available through Labs sometime “soon.” This lets users ask complicated questions, such as “Compare the Phillies' and White Sox's home game win percentages by year over the past five seasons.” The AI will search across multiple sources, put that data together in a single answer, and even create visualizations on the fly to help you better understand the data.

Another feature leverages Project Mariner, Google's agent that can interact with the web to take actions on your behalf. Initially available for queries involving restaurants, events, and other local services, it lets AI Mode save you time researching prices and availability across multiple sites to find the best option, such as reasonably priced tickets.

Search Live, rolling out later this summer, will let you ask questions based on what your phone's camera is seeing in real time. This goes beyond the visual search capabilities of Google Lens, as you can have an interactive conversation with the AI using both video and audio, similar to Google's multimodal AI system, Project Astra.

Search results will also be personalized based on your past searches and, if you choose to connect your Google apps, on data from those apps, using a feature that will roll out this summer. For example, if you connect your Gmail, Google could learn your travel dates from a booking confirmation email, then use them to recommend events in the city you are visiting that will take place while you are there. (Anticipating some privacy concerns, Google notes that you can connect or disconnect your apps at any time.)

Gmail is the first app to be supported with personalized context, the company notes.