Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

Evolving global regulations that aim to reduce children’s access to harmful content, as well as incidents and lawsuits related to youth online safety, have prompted Roblox to overhaul how it handles minors’ accounts and verifies users’ ages.

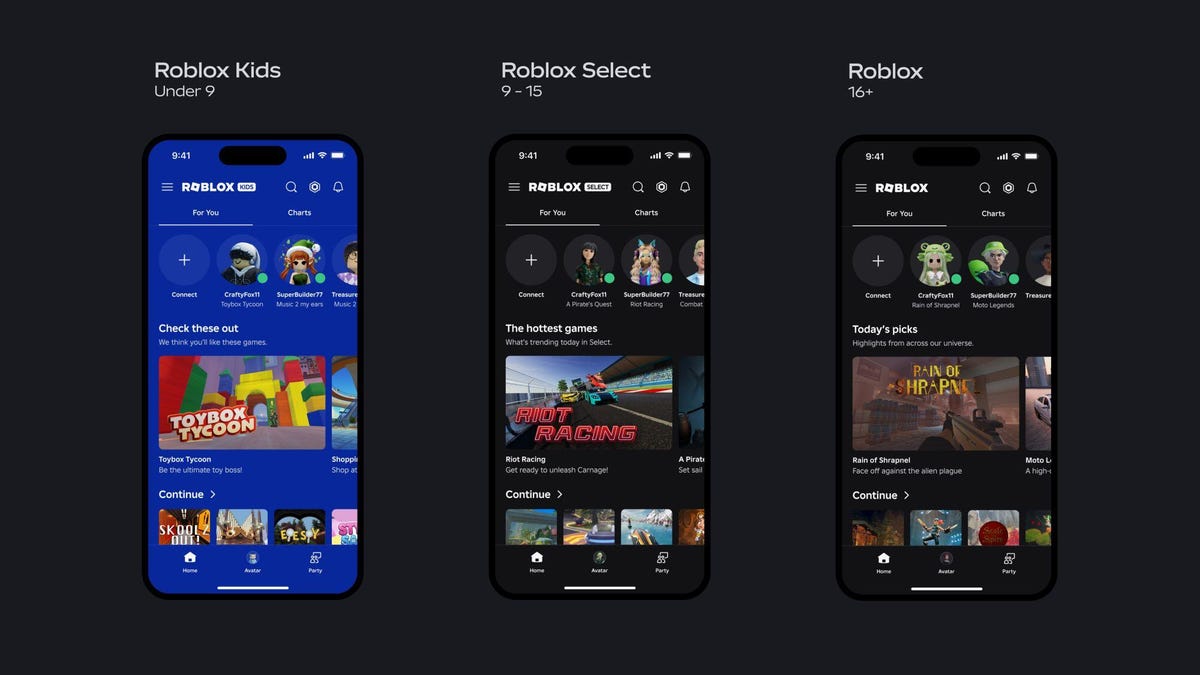

Starting in early June, the popular online gaming platform plans to group its younger users into two new account types: “Roblox Kids” for those ages 5 to 8, and “Roblox Select” for those ages 9 to 15. Everyone who is 16 or older will be in an age group called simply “Roblox.”

Kids and Select accounts will have distinct backgrounds across Roblox apps to indicate the type of account being used, the company said. Accounts will be assigned to age groups as determined by the platform’s global age verification technology or by a verified parent.

David Baszucki, CEO and founder of Roblox, detailed the changes in a blog post on Monday, including the restrictions on each account type and the parental control options that will soon be available on Roblox.

“When it comes to safety, we’re doing the right thing, including proactive filtering, age verification, parental controls, and providing clear content ratings, because the well-being of our community is our top priority,” Baszucki said in the post.

The chat function has been a particular point of criticism against Roblox, which has faced scrutiny and lawsuits related to online grooming, in which adult predators contact minors through uncensored chats. In one recent high-profile case in the UK, a 19-year-old man contacted a 14-year-old girl via Roblox chat, then encouraged her to move to other messaging platforms, where he continued to engage in “highly sexualized” conversations and shared intimate photos and videos.

As part of the new age groups, basic chat for Kids accounts will be turned off by default, and access will be limited to games with Minimal or Mild content maturity labels. For Select accounts, chat communications will be “gradually introduced with safeguards,” and access will be limited to games with content maturity labels up to Moderate.

As more scrutiny is placed on social media and gaming platforms that attract younger audiences, many countries and states have introduced laws requiring platforms to verify users’ ages, often requiring government-issued IDs or parental consent to create accounts.

Companies like Discord, OpenAI, and Google-owned YouTube are taking different approaches to introducing age verification technology. Some use artificial intelligence to guess users’ ages to ensure young people are not exposed to inappropriate content or contact.

Discord, for example, presented its plan to verify the ages of users on its platform, something it says it already does automatically, but the plan was met with significant backlash. The company ultimately delayed its age verification requirements.

Part of the challenge in implementing age verification technology is avoiding disruption to platform engagement. Some companies face hurdles in creating systems that are difficult to fool or bypass and that comply with regulations across regions. Age verification laws also face opposition from privacy and freedom of expression activists, who say such regulations could easily violate First Amendment protections and pose severe privacy risks.