Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

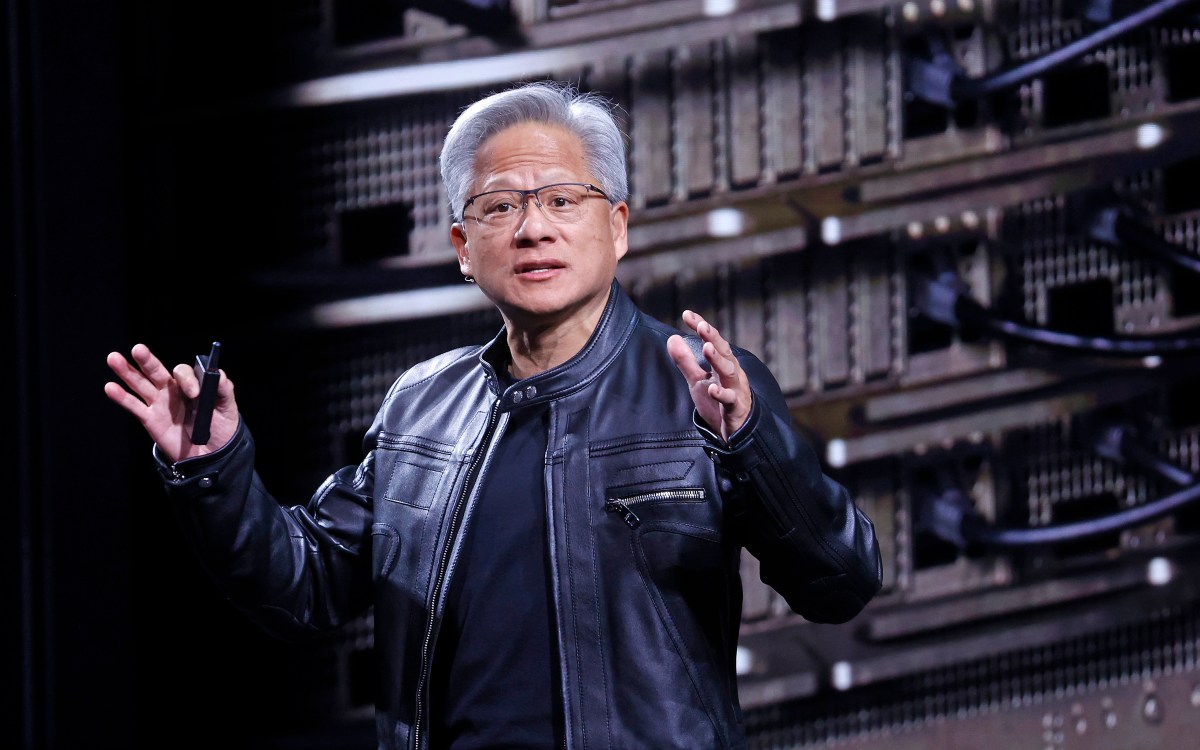

Today at the Consumer Electronics Show, Nvidia CEO Jensen Huang officially launched the company’s new Rubin computing architecture, which he described as the state-of-the-art in AI hardware. The new architecture is currently in production and is expected to ramp up further in the second half of the year.

“Vera Rubin is designed to address this fundamental challenge we face: the amount of computation needed for artificial intelligence is increasing exponentially,” Huang told the audience. “Today, I can tell you that Vera Rubin is in full production.”

The Rubin architecture, which was first announced in 2024, is the latest result of Nvidia’s relentless hardware development cycle, which has turned Nvidia into the most valuable company in the world. The Rubin architecture will replace the Blackwell architecture, which in turn replaced the Hopper and Lovelace architectures.

Rubin chips are already slated for use by almost every major cloud service provider, including high-profile Nvidia partnerships with Anthropic, OpenAI, and Amazon Web Services. Rubin systems will also be used in HPE’s Blue Lion supercomputer and the upcoming Doudna supercomputer at Lawrence Berkeley National Laboratory.

Named for the astronomer Vera Florence Cooper Rubin, the Rubin architecture consists of six separate chips designed to be used in concert. The Rubin GPU stands at the center, but the architecture also addresses growing bottlenecks in storage and interconnection through new improvements in the BlueField and NVLink systems, respectively. The architecture also includes a new Vera CPU, designed for agentic reasoning.

Explaining the benefits of the new storage, Dion Harris, senior director of AI infrastructure solutions at Nvidia, pointed to the growing cache-related memory demands of modern AI systems.

“When you start enabling new types of workflows, like agentic AI or long-running tasks, that puts a lot of pressure and demands on your KV cache,” Harris told reporters on a call, referring to a memory system used by AI models to condense inputs. “So we’ve introduced a new layer of storage that connects externally to the computing device, allowing you to scale your storage more efficiently.”

As expected, the new architecture also represents a significant advance in speed and power efficiency. According to Nvidia’s tests, the Rubin architecture will perform three and a half times faster than the previous Blackwell architecture on model training tasks and five times faster on inference tasks, reaching 50 petaflops. The new platform will also support eight times more inference computation per watt.

Rubin’s new capabilities come amid intense competition to build AI infrastructure, which has seen AI labs and cloud providers scrambling to acquire Nvidia chips as well as the facilities needed to run them. On an October 2025 earnings call, Huang estimated that between $3 trillion and $4 trillion will be spent on AI infrastructure over the next five years.