Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

As Meta heads to trial in New Mexico for allegedly failing to protect minors from sexual exploitation, the company is making an aggressive push to exclude certain information from the court proceedings.

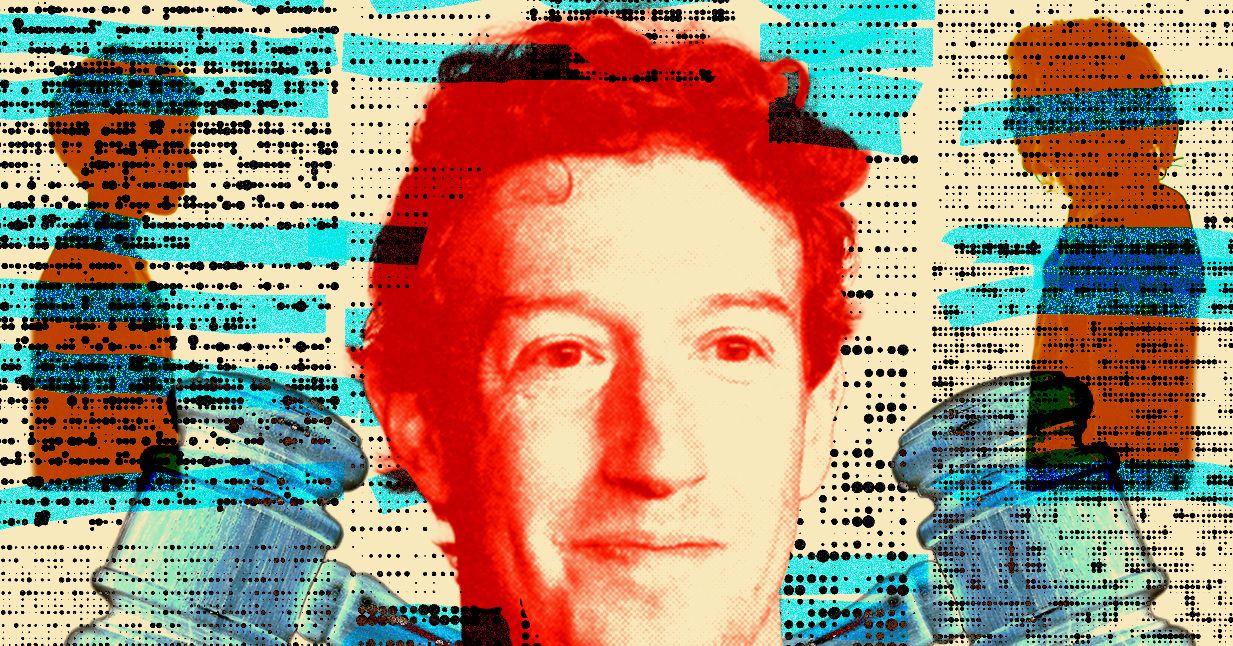

The company has petitioned the judge to exclude certain research studies and articles about social media and youth mental health; any mention of a recent high-profile case involving teen suicide and social media content; and any references to Meta’s finances, employees’ personal activities, and Mark Zuckerberg’s time as a student at Harvard.

Meta’s requests to exclude information, known as motions in limine, are a standard part of pretrial proceedings, in which a party can ask a judge to decide in advance what evidence or arguments will be allowed in court. The aim is to ensure that the jury is presented with facts rather than irrelevant or prejudicial information, and that the defendant receives a fair trial.

Meta asserted in pretrial motions that the only question the jury should be asked is whether Meta violated New Mexico’s Unfair Practices Act through the way it allegedly handled child safety and youth mental health, and that other information, such as Meta’s alleged election interference and misinformation or privacy violations, should not be taken into consideration.

But some of the requests seem unusually aggressive, two legal researchers told WIRED, including a request that the court not mention the company’s AI-powered chatbots, and the broad reputational protections Meta is seeking. WIRED reviewed Meta’s motions in limine through a public records request to the New Mexico courts.

The motions are part of a landmark case brought by New Mexico Attorney General Raul Torrez in late 2023. The state alleges that Meta failed to protect minors from online solicitation, human trafficking, and sexual assault on its platforms. It also alleges that the company proactively served pornographic content to minors on its apps and failed to enact certain child safety measures.

The state’s complaint details how its investigators easily created fake Facebook and Instagram accounts posing as underage girls, and how these accounts were quickly sent explicit messages and shown algorithmically amplified pornographic content. In another test case mentioned in the complaint, investigators created a fake account posing as a mother looking to traffic her young daughter. According to the complaint, Meta did not flag suggestive remarks that other users left on her posts, nor did it shut down some accounts that were reported for violating Meta’s policies.

Meta spokesperson Aaron Simpson told WIRED via email that for more than a decade, the company has listened to parents, experts, and law enforcement, and conducted in-depth research, to “understand the issues that matter most,” and “use those insights to make meaningful changes — like offering teen accounts with built-in protections and providing parents with the tools to manage their teens’ experiences.”

“While New Mexico makes inflammatory, irrelevant and distracting arguments, we are focused on demonstrating our long-standing commitment to supporting youth,” Simpson said. “We are proud of the progress we have made, and are always working to do better.”

In its motions leading up to the New Mexico trial, Meta asked the court to exclude any references to an advisory published by Vivek Murthy, the former U.S. Surgeon General, about social media and youth mental health. It also asked the court to exclude an editorial written by Murthy, as well as Murthy’s call for a warning label on social media. Meta argues that the former Surgeon General’s statements treating social media companies as a monolith are “irrelevant, unacceptable and unjustifiably biased hearsay.”