Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

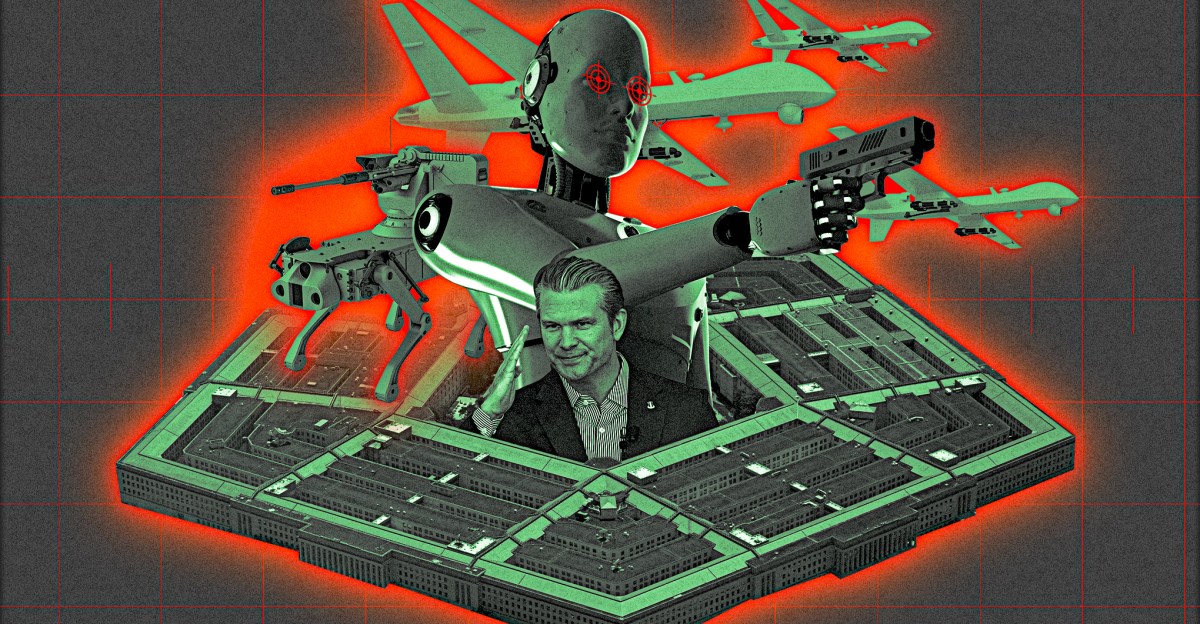

Anthropic’s weeks-long battle with the Defense Department has played out through social media posts, admonishing public statements, and direct quotes from unnamed Pentagon officials to the media. But the future of the $380 billion AI startup boils down to just three words: “any lawful use.” The new terms, which OpenAI and xAI have reportedly already approved, would give the US military carte blanche to use AI services for mass surveillance and for lethal autonomous weapons, meaning AI with full authority to track and kill targets without humans involved in the decision-making process.

The negotiations have turned ugly, with Emil Michael, the Pentagon’s chief technology officer, who was previously a top executive at Uber, leading the government’s threats to label Anthropic a “supply chain risk,” according to two people familiar with the negotiations. This classification is typically reserved for threats to national security, including malicious foreign influence or cyberwarfare. Anthropic CEO Dario Amodei reportedly met with Secretary Pete Hegseth on Tuesday at the Pentagon, and an unnamed Defense Department official described it as a “frivolous or trivial meeting.”

For the Pentagon to issue this threat to an American company is unprecedented. But for the Pentagon to issue it publicly is even more bizarre.

For security purposes, the Pentagon does not publicly disclose the companies on these lists, much less publicly threaten companies that do not agree with its views. Indeed, Geoffrey Gertz, a senior fellow at the Center for a New American Security (CNAS), told The Verge that under current federal regulations, the Pentagon could have classified Anthropic as a risk without ever informing the public or saying why. “It’s an additional step of trying to specifically classify it as a national security risk, preventing other companies from doing business with Anthropic, and that goes beyond here.”

The conflict revolves around Anthropic’s enforcement of its Acceptable Use Policy.

If the designation becomes official, it will end Anthropic’s $200 million contract with the Pentagon, but it will have a more devastating ripple effect on Anthropic’s overall bottom line. Defense contractors and major technology companies, such as AWS, Palantir, and Anduril, use Anthropic’s Claude in their work for the Pentagon, since it was the first AI model cleared for use with classified information. To put it more bluntly: If Anthropic were labeled a “supply chain risk,” any company currently working with the military or hoping to land a military contract would have to give up Anthropic’s AI systems, which are believed to be some of the best in the industry. (The night before the scheduled meeting between Amodei and Hegseth, the Pentagon confirmed that it had signed an agreement to use Grok, the controversial AI model from Elon Musk’s xAI, in classified systems. The Pentagon did not immediately respond to a request for comment.)

The designation could be applied in a very narrow sense or in a very broad one. “I think the most logical explanation is the narrower definition, which is that Anthropic cannot be used as part of a specific work statement for the Pentagon,” Gertz said. “But based on some reports and efforts to make this look like a punitive move against Anthropic, it’s worth considering both scenarios.”

Although the Pentagon and its media allies have mounted a campaign to label Anthropic as “woke,” they have yet to make any real accusations about security vulnerabilities or the potential for espionage. Instead, the clash is over Anthropic’s enforcement of its Acceptable Use Policy, according to people familiar with the internal discussions.

A source familiar with the situation, who requested anonymity due to the sensitive nature of the negotiations, told The Verge that Anthropic has been very clear to the government about its red lines, and that there are two narrow things the company will not agree to: autonomous kinetic operations and mass domestic surveillance. The source said the latter was because “laws have not kept up with what AI can do” and because such surveillance may violate Americans’ civil liberties. As for lethal autonomous weapons, the source said the technology “doesn’t exist yet for fully autonomous weapons without humans in the loop.”

Hamza Chaudhry, lead for AI and national security at the Future of Life Institute, a nonpartisan research group focused on AI governance, noted that Anthropic’s red lines already reflect existing government guidance that has not been rescinded.

“DoD Directive 3000.09 requires that all autonomous weapons systems be designed so that commanders and operators are able to ‘exercise appropriate levels of human judgment over the use of force,’ and the Political Declaration on the Military Use of Artificial Intelligence launched by the US government and endorsed by 50 countries enshrines this principle,” he told The Verge by text. “DoD Directive 5240.01, reinforced by provisions of the National Defense Authorization Act for Fiscal Year 2017 and the Trump-era Responsible AI Implementation Pathway, prohibits intelligence components from collecting information on U.S. persons except under specific legal authorities such as the Foreign Intelligence Surveillance Act or Title 50.

“Anthropic’s Acceptable Use Policy reflects these same lines, and until the Pentagon formally abandons, clarifies, or updates these policy positions, the big question is whether the company can be forced out of a policy to which the government itself has committed itself in principle.”

Negotiating on behalf of the Pentagon is Michael, a Trump appointee and undersecretary of defense for research and engineering, a position often described as the Pentagon’s chief technology officer. The first source described Michael, who built a strong reputation as Uber’s one-time chief business officer and who bragged about conducting opposition research on journalists, as a “tough negotiator.” (Michael was fired from Uber in 2017, after the company’s board conducted an investigation into the company’s culture of sexual harassment, which brought up a visit he and several executives had made to an escort bar in South Korea.)

“It’s really a matter of principle for Emil,” said another person familiar with the matter, adding that Michael was not happy about a private company trying to restrict the government’s use of its technology. It’s not clear whether the White House or David Sacks, the venture capitalist and AI and cryptocurrency czar, approved of Michael’s tough tactics in advance.

At present, Anthropic’s Acceptable Use Policy is built into the $200 million contract it signed with the Department of Defense last July. In its announcement, the company mentioned the phrase “responsible artificial intelligence” five times. “At the heart of this work is our conviction that the most powerful technologies bear the greatest responsibility,” the company wrote, noting that in the context of government, “where decisions affect millions and the stakes could not be higher,” responsibility was “essential” to ensure that the development of AI “promotes democratic values globally by maintaining technological leadership to protect against authoritarian abuse.”

But in January, Hegseth published a memo announcing that the department will become an “‘AI-first’ warfighting force across all components” and that “any lawful use” language must be incorporated into any contract for the purchase of AI services within 180 days, including existing guidance.

In the memo, Hegseth repeatedly stressed that the department would prioritize speed at all costs, writing that the country must “remove barriers to data sharing… [and] treat risk swaps, ‘equity,’ and other subjective matters as if we were at war.” He also said that when it comes to developing and piloting AI agents, the department will integrate them “from campaign planning to serial execution,” as well as turn “intelligence into weapons in hours.”

Hegseth has repeatedly prioritized speed over safety and potential errors: “We must accept that the risks of not moving quickly enough outweigh the risks of incomplete alignment.” Later in the memo, he wrote that “responsible AI” would see major changes at the department, both on the battlefield and within the ranks of the military. “Diversity, equity, inclusion, and social ideology have no place in the DoW,” he wrote, adding that the department “must also use models free of usage policy constraints that may limit lawful military applications.” Echoing Trump’s anti-“woke AI” executive order, Hegseth announced that model objectivity will be a new primary procurement criterion for AI services.

OpenAI, xAI, and Google immediately renegotiated their $200 million contracts with the Pentagon to comply with Hegseth’s memo. But none of those companies’ models carry an Impact Level 6 security rating, meaning ChatGPT, Grok, and Gemini wouldn’t be able to immediately replace Claude if Anthropic were blacklisted. That single-vendor vulnerability would backfire on the Pentagon.

“Claude is the only leading AI model running on the Pentagon’s fully classified networks, deployed through Palantir’s AI platform and Amazon’s Top Secret Cloud, meaning it is at the center of the workflow that most other models don’t yet have access to,” Chaudhry noted. “The designation would require every defense contractor seeking government work to certify that it has removed all Anthropic technology from its systems.”

This has given Anthropic leverage in its clash with the Pentagon, which grew more intense after the company learned that its models had been used in the arrest of Venezuelan President Nicolás Maduro, in violation of their existing agreement.

Technically, Anthropic cannot attempt to coordinate or collaborate with other AI labs that are offered the new terms, even if those labs were open to it, because that would conflict with federal procurement rules. But as the battle plays out in the public eye, tech workers, AI employees, and others who currently work or previously worked in the tech industry have expressed frustration that other companies aren’t fighting on the same terms as Anthropic. Others seem to think it will only be a matter of time before Anthropic gives in.

“It would be a really good time for (other labs) to say, ‘Wait, what are you doing with our technology?’” said William Fitzgerald, a former Google employee who now runs an advocacy firm called The Worker Agency. “The people in the AI labs have a lot of power. They’re smaller teams, and they’re still kind of shaping who they’re going to be… I think they can justify their assessments without military action. There are other ways you can run a business without killing people in your business model.”