Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

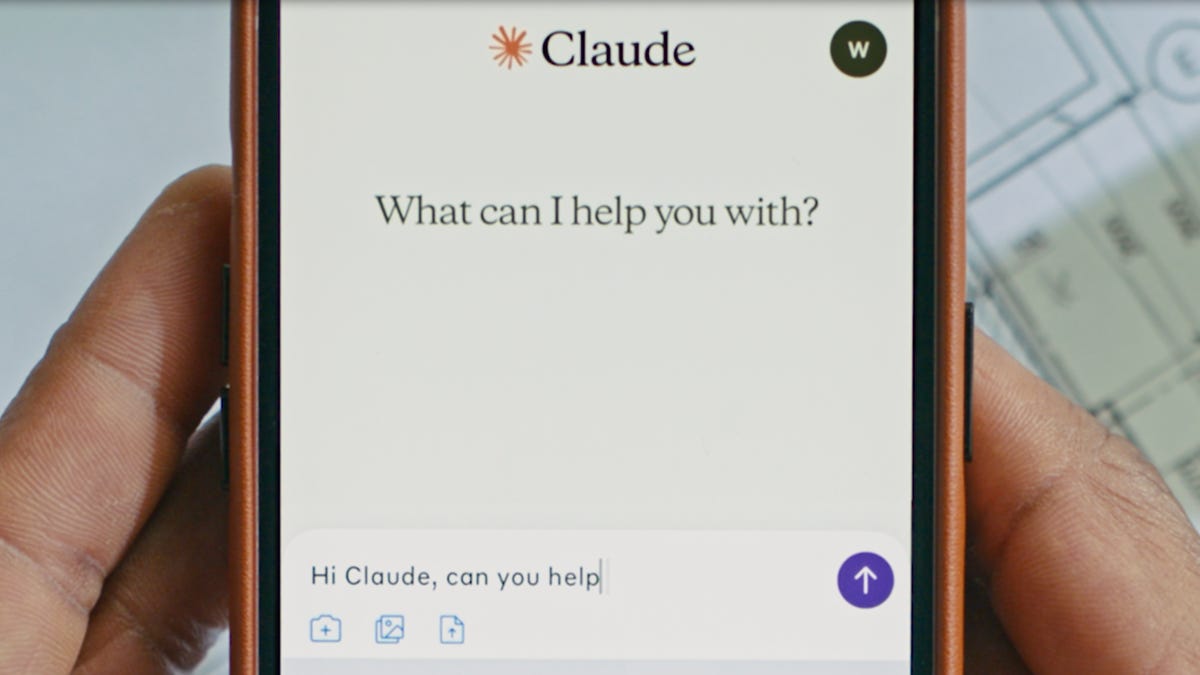

Anthropic has announced a new experimental safety feature that allows its Claude Opus 4 and 4.1 models to end conversations in rare cases of persistently harmful or abusive interactions. The move reflects the company's growing focus on what it calls “model welfare”: the idea that protecting AI systems, even if they are not sentient, may be a prudent step in alignment and ethical design.

According to Anthropic's own research, the models were given the ability to end dialogues after repeated harmful requests, such as requests for sexual content involving minors or for instructions that would facilitate terrorism, particularly when the AI has already refused and tried to redirect the conversation. In such exchanges the AI may show what Anthropic describes as “apparent distress”, both in simulated tests and with real users, and this observation informed the decision to give Claude the ability to end these interactions.

When the feature is triggered, users can no longer send messages in that particular chat, although they remain free to start a new conversation, or to edit previous messages and retry them to create a new branch. Importantly, other active conversations are not affected.

Anthropic stresses that this is a last-resort measure, intended only after multiple attempts at refusal and redirection have failed. The company explicitly instructs Claude not to end chats when a user may be at imminent risk of harming themselves or others, particularly when dealing with sensitive topics such as mental health.

Anthropic frames this new ability as part of its exploratory work on model welfare. This is a broader initiative that explores low-cost safety interventions in case AI models develop some form of preferences or vulnerabilities.

The statement says that the company remains “highly uncertain about the potential moral status of Claude and other LLMs” (large language models).

Although the feature will rarely be triggered and mainly affects extreme cases, it marks a milestone in Anthropic's approach to AI safety. The new conversation-ending tool contrasts with earlier systems that focused solely on protecting users or preventing misuse.

Here, the AI itself is treated as having interests of its own. Claude can, in effect, say “this conversation is not healthy” and end it to protect the model's own integrity.

Anthropic's approach has sparked a broader debate over whether AI systems should be granted protections to reduce possible “distress” or unpredictable behavior. Some critics argue that the models are merely machines. Others welcome the move as an opportunity to foster more serious discussion of AI ethics and alignment.

“We are treating this feature as an ongoing experiment and will continue to refine our approach,” the company said.