Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

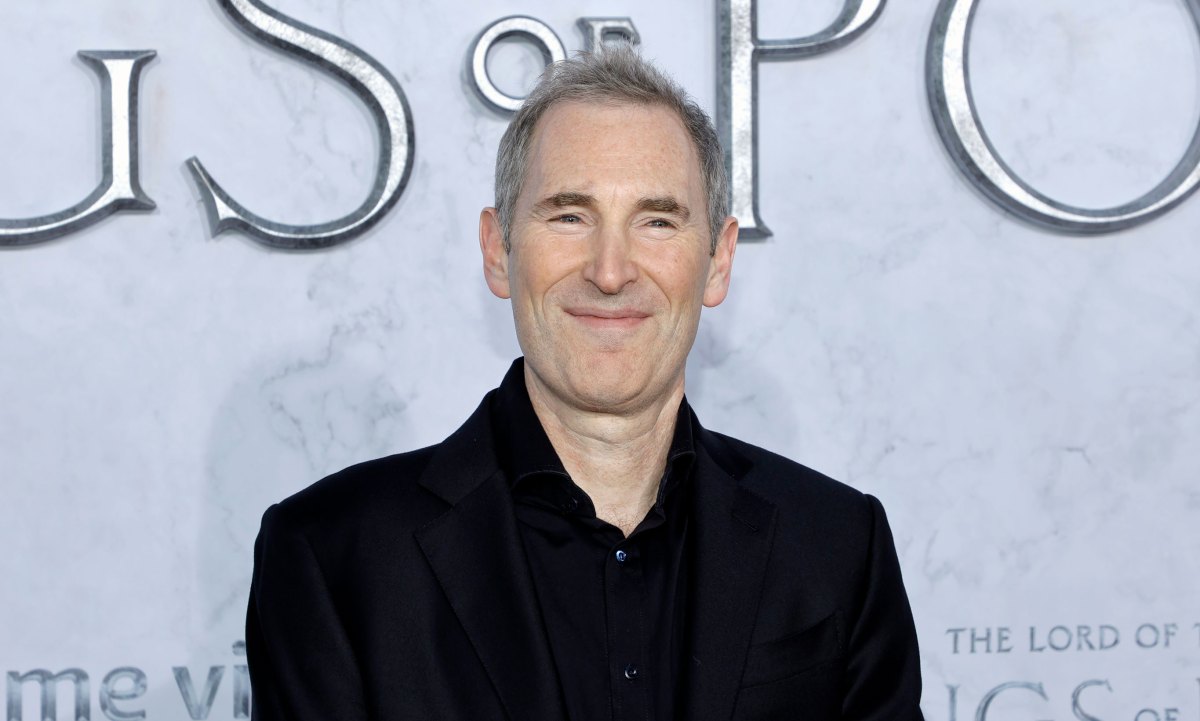

Can any company, big or small, really unseat Nvidia's AI chip dominance? Maybe not. But there are hundreds of billions of dollars in revenue for those who can claim even a slice of it for themselves, Amazon CEO Andy Jassy said this week.

As expected, the company used its AWS re:Invent conference to announce the next generation of its Nvidia-competing AI chip, Trainium3, which is four times faster yet uses less power than the current Trainium2. In a post on X, Jassy revealed a few tidbits about the current Trainium that explain why the company is so optimistic about the chip.

He said Trainium2 "has substantial traction, is a multibillion-dollar revenue run-rate business, has 1M+ chips in production, and 100K+ companies using it as the majority of Bedrock usage today."

Bedrock is Amazon’s AI application development tool that allows businesses to choose from several AI models.

Amazon's AI chip is winning over the company's massive roster of cloud customers because it "has price-performance advantages over other GPU options that are compelling," Jassy said. In other words, he believes it performs better and costs less than the "other GPUs" on the market.

That is, of course, Amazon's classic MO: offering its own homegrown technology at lower prices.

AWS CEO Matt Garman offered even more insight, in an interview with CRN, about the single customer responsible for a big chunk of those billions in revenue. No shock here: it's Anthropic.

"We've seen a tremendous amount of traction from Trainium2, particularly from our partners at Anthropic who announced Project Rainier, where there are over 500,000 Trainium2 chips helping them build the next generations of models for Claude," Garman said.

Project Rainier is Amazon's most ambitious AI server cluster, spread across multiple data centers in the US and designed to meet Anthropic's growing needs. It came online in October. Amazon is, of course, a major investor in Anthropic. In exchange, Anthropic made AWS its primary model training partner, although Anthropic is now also offered on Microsoft's cloud via Nvidia chips.

OpenAI is now also using AWS in addition to Microsoft's cloud. But the OpenAI partnership couldn't have contributed much to Trainium's revenue, because AWS is running it on Nvidia chips and systems, the cloud giant said.

In fact, only a handful of US companies, such as Google, Microsoft, Amazon, and Meta, have all the engineering chops (expertise in silicon chip design, high-speed interconnect, and networking technology) to even attempt to truly compete with Nvidia. (Remember, Nvidia cornered the market on one major high-performance networking technology in 2019, when CEO Jensen Huang outbid Intel and Microsoft to acquire InfiniBand hardware maker Mellanox.)

On top of that, AI models and software built to run on Nvidia chips also rely on Nvidia's proprietary Compute Unified Device Architecture (CUDA) software. CUDA lets applications use GPUs for parallel processing, among other tasks. Just like the Intel-versus-SPARC chip wars of yesteryear, rewriting an AI application for a non-CUDA chip is no small matter.

However, Amazon may have a plan for that. As previously reported, the next generation of its AI chip, Trainium4, will be built to interoperate with Nvidia GPUs in the same system. Whether that helps peel more business away from Nvidia or simply reinforces Nvidia's dominance, albeit on AWS's cloud, remains to be seen.

It may not matter to Amazon. If it is already on track to make billions of dollars from the Trainium2 chip, and the next generation will be that much better, that may be winning enough.