Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

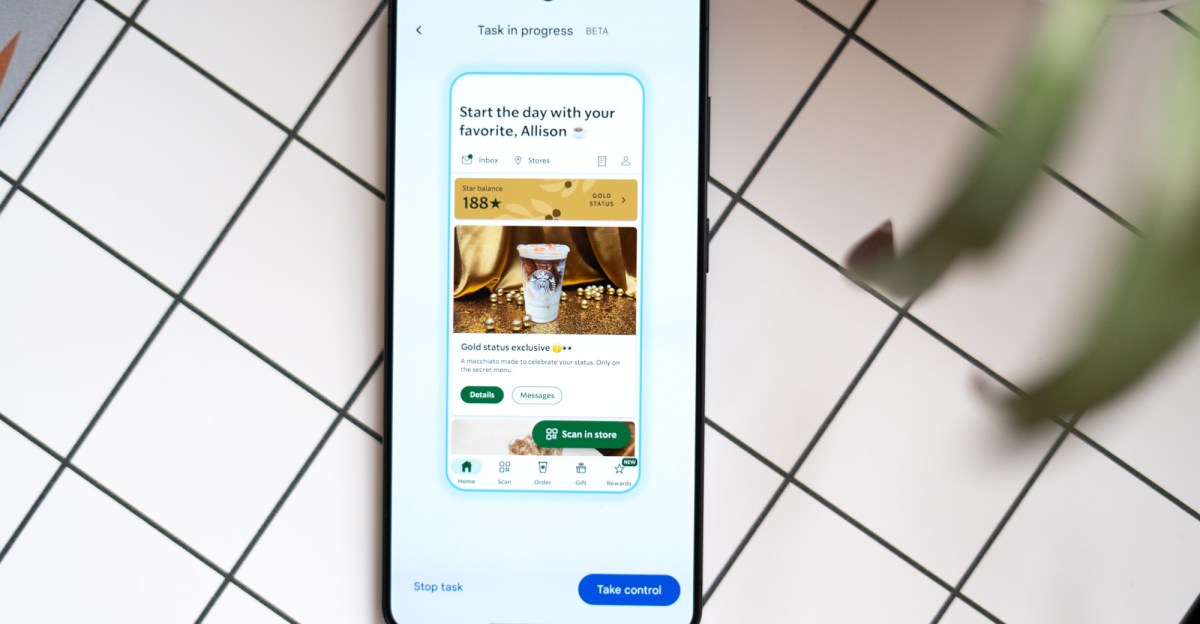

I’ve been testing Gemini’s new task automation on the Pixel 10 Pro and Galaxy S26 Ultra, which for the first time lets Gemini take the lead and use apps for you. It’s limited to a small subset of apps at the moment (a few food delivery and ride-sharing services) and is still in beta. It’s slow, annoying at times, and doesn’t solve any serious problem you encounter while using your phone. But it’s very impressive, and I don’t think it’s an exaggeration to say that this is a glimpse into the future. We still have a long way to go, but this is the first time I’ve seen a real AI assistant actually working on a phone, and not in a carefully controlled keynote or a demo inside a conference room.

First: Gemini is much slower than you, me, or anyone else at using a phone. If you need to order an Uber right this second, you are still the best person for the job. But before you write it off, remember that task automation is designed to run in the background while you do other things on your phone. Better yet, it keeps working while you’re not looking at your phone, so you can do things like check that your passport is in your bag for the tenth time.

But if you’re curious, like me, you can watch the whole thing happen. As it works, text appears at the bottom of the screen indicating what Gemini is doing. Things like “choose a second portion of teriyaki chicken for the combo,” which is what happened when I directed it to order dinner on Saturday night. Watching Gemini figure things out as it goes kind of rules, honestly. I had asked for a chicken combo platter. The menu offered options in half-serving increments, so it correctly added two servings of chicken.

It’s for the best that when you start an automation with Gemini, the default behavior is to run it in the background. You have to tap a button and open another window if you want to watch Gemini work through the task. It can be painful. Watching the computer try to find a veggie side on the Uber Eats menu when it’s sitting right there at the top of the screen is like watching a horror movie and knowing that the killer is in the closet next to the protagonist. I mean, except for the killing part. Gemini made a few wrong turns when putting together my teriyaki order, which it eventually sorted out on its own, but the entire episode took about nine minutes. Not ideal.

Gemini is supposed to carry out your task up to the point when it’s time to press confirm and order your car or dinner, so you can double-check its work. I think this is the only reasonable way to use this feature at the moment, and I don’t mind the extra friction of completing an order myself. In my tests over the past five days, it has never gone rogue and completed an order for me. It is surprisingly accurate; I had to make very few adjustments to the final order. If it fails, which I’ve seen happen several times, it’s usually within the first minute or two, when something in the app needs my attention, like giving it permission to use my location, or changing my delivery location to home instead of Nevada, which is the last place I used the app. I had to figure out what the problem was in such cases, but once it was resolved, I was able to restart the automation without a problem.

This is the one that really got me. I put an event on my calendar for a flight to San Francisco the next day (a fake trip for me, but with real flight details). I gave Gemini a vague prompt to schedule an Uber that would get me to the airport in time for my flight tomorrow. Since Gemini has access to my email and calendar, it can find that information. It needed a little extra guidance, perhaps because the trip wasn’t in my email like it expected. But still, it found the flight information, suggested leaving by 11:30 or 11:45 AM (a logical time for a 1:45 PM flight since I live near the airport), and asked if I wanted to schedule a ride at one of those times. I confirmed the time, and it proceeded to set up the ride in about three minutes without further input on my part.

It’s a little impressive when you consider that Uber doesn’t even refer to it as scheduling a ride; you reserve a trip. This is the main difference between the digital assistants we’ve been using and the AI assistants that are emerging now. The ability to use natural language when speaking to a computer makes a big difference when you’re controlling your smart home or placing your dinner order. If your computer is going to stumble and ask for clarification when you forget that a restaurant calls your meal a “dish” and not a “combo,” or if you order “slaw” instead of “shredded cabbage,” it’s no more useful than the assistants we’ve been using for the past decade to set timers and play music.

However, watching Gemini tap and swipe around Uber Eats makes one thing painfully clear: If you were designing an app for an AI to use, it wouldn’t look like the apps that exist today. You know, apps designed for humans. An AI assistant won’t be tempted by a big ad in the middle of the page to save 30 percent on your order. A delicious-looking, well-styled photo of the dish it’s ordering is no more convincing than a low-quality photo. You’d give it a database, not a bunch of clutter to wade through, which is something the industry is working toward with the Model Context Protocol, or MCP.

An AI model working its way through a human-centric interface seems like the most fragile and impractical way to order a pizza. It gets tripped up sometimes, and it’s not good at letting you know why it can’t do something. This version of task automation seems to be a temporary solution until app developers adopt more robust methods: MCP or Android’s app functions. Sameer Samat, head of Android at Google, told me recently that Gemini takes the reasoning approach in the absence of the other two. Perhaps this version of task automation is a showcase of what’s possible, or a way to get developers to adopt one of the other approaches. Either way, this seems like a notable first step toward a new way of using our mobile assistants: one that’s strange and slow, but very promising.

Photography by Allison Johnson / The Verge