Physical Address

304 North Cardinal St.

Dorchester Center, MA 02124

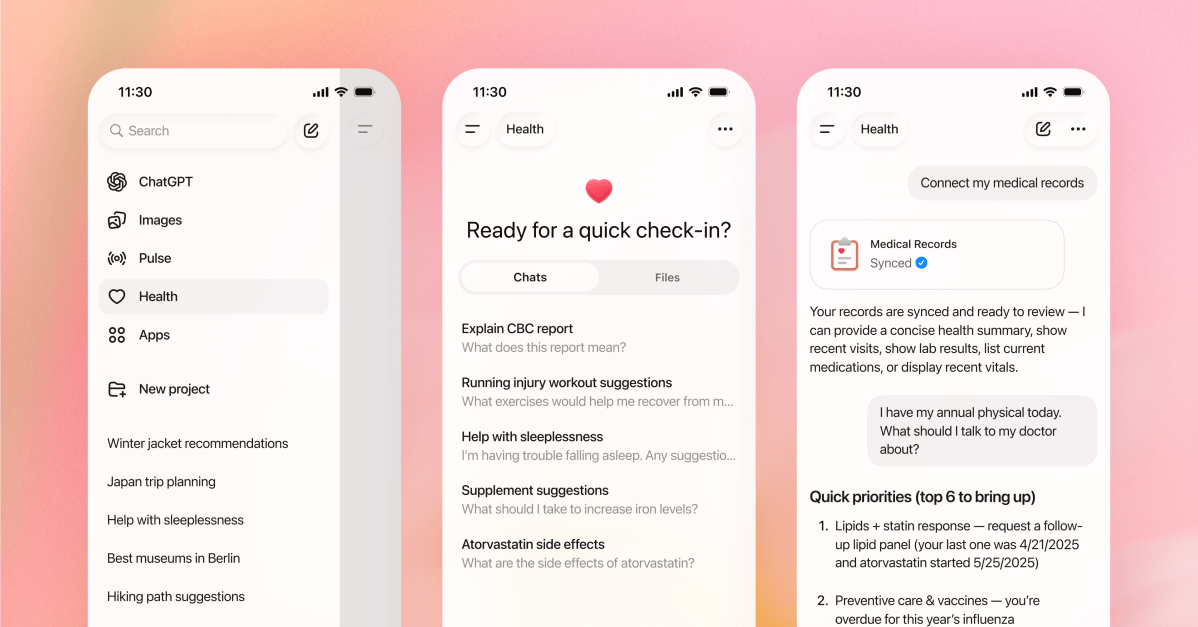

OpenAI has been dropping hints this week about the role of AI as a “healthcare ally,” and today the company is announcing a product in line with that idea: ChatGPT Health.

ChatGPT Health is a protected tab within ChatGPT designed for users to ask their health-related questions in what the company describes as a more secure and personalized environment, with chat history and memory kept separate from the rest of ChatGPT. The company encourages users to connect their personal medical records and health apps, such as Apple Health, Peloton, MyFitnessPal, Weight Watchers, and Function, “to get more personalized and consistent answers to their questions.” It suggests linking medical records so ChatGPT can analyze lab results, visit summaries, and clinical history; MyFitnessPal and Weight Watchers for nutritional guidance; Apple Health for health and fitness data, including “movement, sleep, and activity patterns”; and Function for insights into laboratory tests.

On the medical records front, OpenAI says it has partnered with b.well, which will provide back-end integration for users to upload their medical records, since b.well works with about 2.2 million providers. Currently, ChatGPT Health requires users to sign up for a waiting list to request access, as it is starting with a trial group of early users, but the product will be gradually rolled out to all users regardless of subscription level.

The company makes sure to point out in the blog post that ChatGPT Health is “not intended for diagnosis or treatment,” but it can’t fully control how people use the AI when they leave the chat. By the company’s own admission, in underserved rural communities, users send nearly 600,000 healthcare-related messages weekly, on average, and seven out of every 10 healthcare conversations on ChatGPT “take place outside of a clinic’s regular business hours.” In August, doctors published a report on the case of a man who was hospitalized for weeks with a medical condition dating back to the 18th century after allegedly taking ChatGPT’s advice to replace salt in his diet with sodium bromide. Google’s AI Overview feature made headlines for weeks after its launch due to dangerous tips, like putting glue on pizza, and a recent investigation by The Guardian found that this dangerous health advice has continued, with false advice regarding liver function tests, gynecological cancer tests, and recommended diets for people with pancreatic cancer.

In a blog post, OpenAI wrote that based on “non-specific analysis of conversations,” more than 230 million people around the world already ask ChatGPT questions related to health and wellness every week. OpenAI also said that over the past two years it has worked with more than 260 doctors, who provided feedback on model output more than 600,000 times across 30 focus areas, to help shape the product’s responses.

“ChatGPT can help you understand recent test results, prepare for appointments with your doctor, get advice on how to handle your diet and exercise routine, or understand the tradeoffs between different insurance options based on your healthcare patterns,” OpenAI claims in the blog post.

One aspect of health that OpenAI seemed to carefully avoid mentioning in its blog post: mental health. There are a number of examples of adults or minors who died by suicide after confiding in ChatGPT, and in the blog post, OpenAI stuck to a vague mention that users can customize instructions in the health product to “avoid mentioning sensitive topics.” When asked during a press conference on Wednesday if ChatGPT Health would also summarize mental health visits and provide advice in this area, Fidji Simo, chief applications officer at OpenAI, said, “Mental health is definitely a part of health in general, and we are seeing a lot of people turning to ChatGPT for mental health conversations,” adding that the new product “can handle any part of your health including mental health… We are very focused on making sure that in distress we respond accordingly and are directed toward health professionals,” as well as loved ones or other resources.

It is also possible that the product may worsen health anxiety conditions, such as hypochondriasis. When asked if OpenAI had introduced any safeguards to help prevent people with such conditions from getting worse while using ChatGPT Health, Simo said: “We’ve done a lot of work on fine-tuning the model to make sure that we’re informed without ever being alarmist, and that if there’s action that needs to be taken, we direct it to the healthcare system.”

When it comes to security concerns, OpenAI says that ChatGPT Health “operates as a separate space with enhanced privacy to protect sensitive data” and, according to the briefing, the company has added several layers of purpose-built encryption (but not end-to-end encryption). Conversations within the health product are not used to train its underlying models by default, and if a user starts a health-related conversation in regular ChatGPT, the chatbot will suggest moving it to the health product for “additional protection,” according to the blog post. But OpenAI has had security breaches in the past, most notably a March 2023 issue that allowed some users to see chat titles, initial messages, names, email addresses, and payment information from other users. In the event of a court order, OpenAI will still need to provide access to data “where required by valid legal processes or in an emergency,” OpenAI health chief Nate Gross said during the press conference.

When asked if ChatGPT Health is compliant with the Health Insurance Portability and Accountability Act (HIPAA), Gross said that “in the case of consumer products, HIPAA does not apply in that setting — it applies to clinical or professional healthcare settings.”